Keynote

How can retrieval aid long-form text generation?

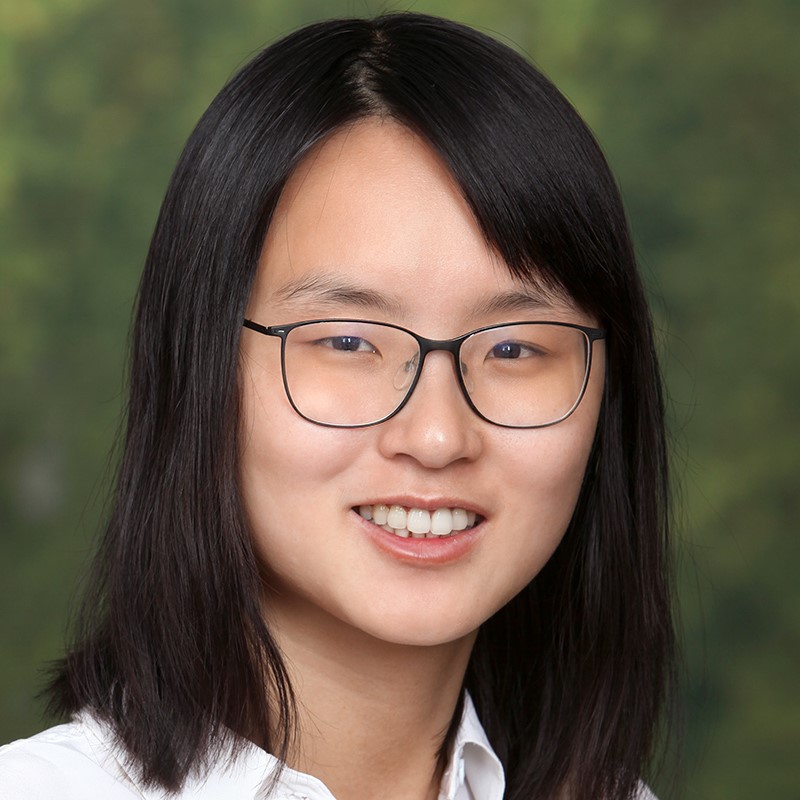

Mohit Iyyer

Large language models (LLMs) encode vast amounts of knowledge about the world in their parameters. However, this parametric knowledge is often insufficient for generating accurate and specific information about arbitrary user-selected topics. The emerging area of retrieval-augmented language models therefore aims to give LLMs access to external data, usually in the form of a vector database. There are many ways to integrate retrieved data into an LLM for text generation, ranging from late-fusion, inference-only methods such as the kNN-LM to end-to-end trainable systems such as RETRO. To what extent do these methods improve long-form text generation, in which a model must produce paragraph-length responses to user queries? In this talk, I first provide an overview of the modeling and evaluation challenges associated with retrieval-augmented long-form question answering. Next, I show that methods such as the kNN-LM may not actually improve the generation quality of language models, which again highlights the challenge of evaluation for future research. Finally, I switch gears and discuss an entirely different use of retrieval: detecting whether a piece of long-form text was generated by an LLM.
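For readers unfamiliar with the "late-fusion" idea mentioned above, here is a minimal sketch of kNN-LM-style interpolation, assuming the formulation from the original kNN-LM work: the next-token distribution is a mixture $p(y \mid x) = \lambda\, p_{\mathrm{kNN}}(y \mid x) + (1-\lambda)\, p_{\mathrm{LM}}(y \mid x)$, where $p_{\mathrm{kNN}}$ weights each retrieved neighbor by $\exp(-d)$ for squared L2 distance $d$. The datastore format, the function names `knn_distribution` and `knn_lm`, and the value `lam=0.25` are illustrative assumptions; real systems use approximate nearest-neighbor search over millions of context vectors rather than the brute-force sort shown here.

```python
import math


def knn_distribution(query, datastore, k, vocab_size):
    """Turn the k nearest datastore entries into a next-token distribution.

    datastore: hypothetical list of (key_vector, next_token_id) pairs, where each
    key is a context representation and the value is the token that followed it.
    """
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    # Brute-force k-nearest-neighbor search (a stand-in for an ANN index).
    neighbors = sorted(datastore, key=lambda kv: sq_dist(query, kv[0]))[:k]

    # Softmax over negative distances; shifting by the minimum keeps exp() stable.
    dists = [sq_dist(query, key) for key, _ in neighbors]
    d_min = min(dists)
    weights = [math.exp(-(d - d_min)) for d in dists]
    total = sum(weights)

    probs = [0.0] * vocab_size
    for w, (_, token) in zip(weights, neighbors):
        probs[token] += w / total  # neighbors that share a token pool their mass
    return probs


def knn_lm(p_lm, p_knn, lam=0.25):
    """Late fusion: interpolate the retrieval and LM distributions token by token."""
    return [lam * pk + (1 - lam) * pl for pk, pl in zip(p_knn, p_lm)]


if __name__ == "__main__":
    # Toy example: three-token vocabulary, two-dimensional context vectors.
    datastore = [([0.0, 0.0], 2), ([0.1, 0.0], 2), ([5.0, 5.0], 0)]
    p_knn = knn_distribution([0.0, 0.0], datastore, k=2, vocab_size=3)
    print(knn_lm([0.5, 0.3, 0.2], p_knn, lam=0.25))
```

Because the base LM is never retrained, this fusion happens purely at inference time, which is what distinguishes it from end-to-end trainable designs such as RETRO.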

Mohit Iyyer is an associate professor of computer science at the University of Massachusetts Amherst. His research focuses broadly on designing machine learning models for long-form language generation (e.g., story generation and machine translation), and his group also works on tasks involving creative language understanding (e.g., modeling fictional narratives and characters). He has received best paper awards at NAACL (2016, 2018), an outstanding paper award at EACL 2023, and a best demo award at NeurIPS 2015, as well as the 2022 Samsung AI Researcher of the Year award. He received his PhD in computer science from the University of Maryland, College Park in 2017 and spent the following year as a researcher at the Allen Institute for Artificial Intelligence.